My Backup Framework

The 3-2-1 rule for backup means that you should have three copies of your data (the original plus two backups), kept on two different types of storage, with one of those backups off-site.

The idea is to protect yourself from a single point of failure. If one or even two of those copies disappear, no problem. You're covered.

“An ounce of prevention is worth a pound of cure.” - Benjamin Franklin

A Recommended Solution

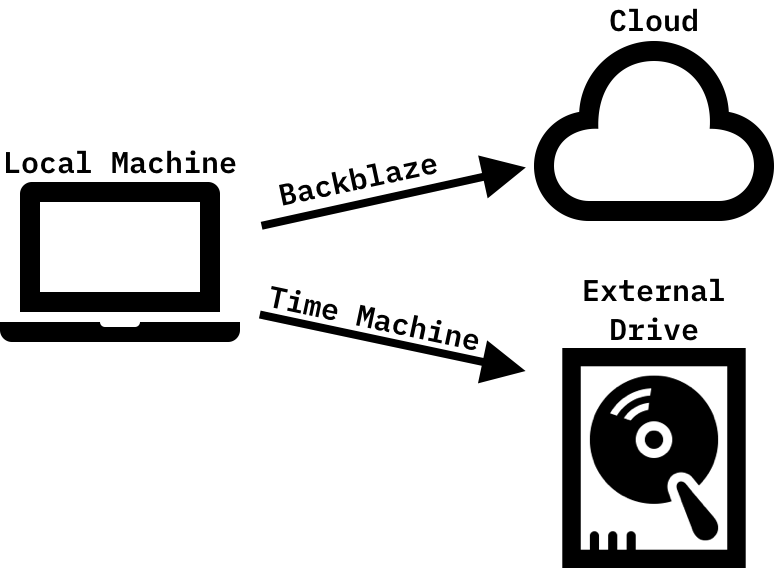

For Mac users, a simple 3-2-1 backup solution might look like this:

Three copies of the data: the original data on the local machine, a local backup on an external drive created using Time Machine, and another backup in the cloud using Backblaze's unlimited personal backup.

It's easy. So easy, in fact, that this is the backup solution I recommend to most people.

The process is mostly automated. The user just needs to remember to connect the external hard drive semi-regularly. Time Machine and Backblaze handle the rest.

My Solution

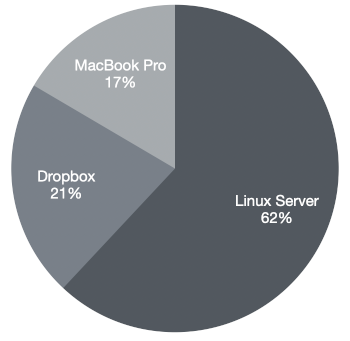

I recommend the above solution to most people, but I don't use it myself because it assumes that all of your data lives on a local machine that can be backed up with Time Machine and Backblaze. This isn't true for me. In fact, the data on my local machine represents only 17% of my data footprint. The other 83% is spread across my server and Dropbox.
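For anyone who wants to work out a similar split for their own data, here is a small sketch of the arithmetic. The `footprint_shares` helper is a made-up name; it takes label and size pairs (in any one consistent unit) and prints each location's share of the total. The sizes themselves would have to come from elsewhere, for example `du` for local folders or `rclone size` for a remote.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print each location's share of the total footprint.
# Arguments are pairs: <label> <size> [<label> <size> ...]
footprint_shares() {
  awk 'BEGIN {
    # First pass: add up all sizes.
    for (i = 1; i + 1 < ARGC; i += 2) total += ARGV[i + 1]
    if (total == 0) exit 1
    # Second pass: print each label with its rounded percentage.
    for (i = 1; i + 1 < ARGC; i += 2)
      printf "%s %.0f%%\n", ARGV[i], 100 * ARGV[i + 1] / total
  }' "$@"
}
```

Calling `footprint_shares local 170 remote 830` with those illustrative numbers prints one line per location with its percentage.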

Unfortunately, neither Time Machine nor Backblaze's unlimited personal backup works well with remote data. They'd both prefer that it live on my local machine.

Fortunately, I discovered a command-line utility called Rclone that makes it easy to back up those pesky remote files.

With a simple command, I can copy all of the files in my Dropbox to an external drive without syncing them to my local machine first.

rclone copy -P dropbox: /mnt/backup/dropbox

A few notes:

- I use `copy` instead of `sync` to protect myself from accidental deletions. Copy won't delete any files on the destination.
- `dropbox` is the name of a 'remote' that I created to connect to Dropbox. A remote is a connection to a storage system. Rclone supports dozens of different storage systems, from cloud storage providers like Dropbox and AWS S3 to SFTP and HTTP.
- The `-P` flag is unnecessary. It just shows the real-time progress of the transfer.
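To see the difference between copy and sync before committing to either, Rclone's `--dry-run` flag reports what a command would do without changing anything. Here is a minimal sketch, assuming the `dropbox` remote and destination path from the command above. The `remote_exists` and `preview_backup` helper names are made up for illustration; only the rclone subcommands and flags are real.

```shell
#!/usr/bin/env bash
# Hypothetical helpers; only the rclone subcommands and flags are real.

# Succeeds if a remote with the given name is configured.
# `rclone listremotes` prints one remote per line, each with a trailing colon.
remote_exists() {
  rclone listremotes | grep -qxF "$1:"
}

# Show what `copy` (or `sync`) would transfer or delete, without doing it.
# Usage: preview_backup copy dropbox: /mnt/backup/dropbox
preview_backup() {
  local mode="$1" src="$2" dest="$3"
  rclone "$mode" --dry-run "$src" "$dest"
}
```

Running `preview_backup sync dropbox: /mnt/backup/dropbox` next to the `copy` variant should make any deletions that sync would perform on the destination visible before they happen.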

Then, once I've backed up to the external drive, I use Rclone to back it up to Google Drive, where I have 5TB of storage thanks to Google Workspace (formerly G Suite for Business).

rclone copy -P /mnt/backup encrypted_gdrive:backup

Rclone allows data to be encrypted before it is sent to the destination. Encryption is handled by creating a 'crypt' remote on top of another remote. It sounds complicated, but it's not. Once it's configured, an encrypted remote works the same as any other remote. Rclone has an excellent page on how to set it up.
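As a rough illustration of that layering, a crypt remote can be created non-interactively with `rclone config create` and the result checked against the unencrypted source with `rclone cryptcheck`. This is a sketch, not my exact configuration: the underlying remote name `gdrive`, the `encrypted` folder, and both helper function names are assumptions. Only `encrypted_gdrive:backup` and `/mnt/backup` come from the command above.

```shell
#!/usr/bin/env bash
# Sketch only: "gdrive" and "encrypted" are assumed names for an existing
# Google Drive remote and a folder on it.

# Create a crypt remote named encrypted_gdrive that wraps gdrive:encrypted.
# See `rclone config create --help` for how password values are stored.
create_crypt_remote() {
  local password="$1"
  rclone config create encrypted_gdrive crypt \
    remote gdrive:encrypted \
    password "$password"
}

# Check local files against their encrypted copies by comparing checksums.
verify_encrypted_backup() {
  rclone cryptcheck /mnt/backup encrypted_gdrive:backup
}
```

The interactive `rclone config` walkthrough does the same job and is probably the friendlier route for a one-time setup.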

Rclone's flexibility means that I can handle all of my backup needs with a simple script, triggered to run daily using a cron job on the server. Here's that script:

rclone copy -P mbp:/Users/andrew /mnt/backup/macbook # Copy my user folder on my MacBook to the backup drive via SFTP

rclone copy -P /home/andrew /mnt/backup/server # Copy my user folder on my server

rclone copy -P dropbox: /mnt/backup/dropbox # Copy remote Dropbox files

rclone copy -P /mnt/backup encrypted_gdrive:backup # Copy an encrypted version of the data on the backup drive to Google Drive
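Because the script runs unattended, it can help to have it refuse to run when the backup drive isn't mounted and to stop at the first failed step. Here is one possible wrapper. The script location, log path, and schedule are hypothetical, and it assumes the server is a Linux machine with the `mountpoint` utility and the drive mounted at `/mnt/backup`. The four `rclone copy` commands are the ones from the script above, minus `-P`, since nobody is watching a cron job's progress.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper around the script above; paths and names are assumptions.
# Example crontab entry (daily at 03:00):
#   0 3 * * * /home/andrew/bin/backup.sh >> /home/andrew/backup.log 2>&1

BACKUP_ROOT="${BACKUP_ROOT:-/mnt/backup}"

# Run every copy step in order; stop at the first failure.
run_backup() {
  # Refuse to run if the drive is not mounted, so files don't land on the
  # server's own disk under an empty mount directory.
  if ! mountpoint -q "$BACKUP_ROOT"; then
    echo "backup drive not mounted at $BACKUP_ROOT" >&2
    return 1
  fi
  rclone copy mbp:/Users/andrew "$BACKUP_ROOT/macbook" &&
  rclone copy /home/andrew "$BACKUP_ROOT/server" &&
  rclone copy dropbox: "$BACKUP_ROOT/dropbox" &&
  rclone copy "$BACKUP_ROOT" encrypted_gdrive:backup
}
```

Adding a `run_backup` call as the last line of the file and pointing cron at it completes the sketch.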

That's all there is to it :)